Computers, Ownership, Agency

Personal computers: the science-fiction-like machines of the modern world. Why we have them, what they offer, and why we give them up at our peril.

I was 3 years old (this would be in 2001) when I first used a personal computer. I grew up around them, and they fascinated me enough that I went on to work professionally on their software. As a kid, I spent my time on the computer in a number of ways: I played video games, I read interesting articles to research new topics, I watched intriguing or entertaining videos, and I stayed up way past my bedtime just to talk with friends. All of these activities depended on one specific property of computers: communication. Computers can not only perform computations locally; they can also communicate information to other computers.

Because computers were always there, I spent my whole life playing around with these magic boxes without fully realizing how magical and important they are, particularly because they can communicate. Appreciating that took time.

If you were to list the major inventions in human history that facilitated communication before the computer, you might name a few important ones: the printing press, the radio, the telephone, or the television. Those were undoubtedly important, but I’ve recently come to believe that the computer is the most revolutionary and important of them all, because it offers an entirely different class of communication, one that has brought about an age of unprecedented freedom and human advancement. Just as importantly, this position of the computer is in danger of being compromised. In this post, I want to explain why the computer is as revolutionary as I claim, how its revolutionary character is being compromised, and how we might go about solving the problem.

Few-To-Many Communication

The printing press, radio, and television each carried communication through a different medium: the printing press through written text, the radio through audio, and the television through video and audio.

But all three of these revolutionary inventions have one detail in common: they facilitate few-to-many communication. For each invention, there is a very small number of information producers and a very large number of information consumers. For this reason, these inventions concentrated an unprecedented amount of power in the hands of those few producers, especially considering that almost all human thinking works by mutating existing ideas rather than producing new ones from scratch. In other words, he who controlled information production controlled the domain of conversation.

Furthermore, the rate at which new information enters such a system at a large scale is limited by how often new information reaches the small sphere of those doing the communicating, or how often existing information is mutated within that sphere.

Chokepoints

Given this, the surge of dystopian fiction during the 20th century becomes less surprising. George Orwell’s 1984, Ray Bradbury’s Fahrenheit 451, and other such novels become slightly less fictional, and much easier to dream up, when you consider that almost all information was sourced from a small group.

The fundamental reason these stories become “less fictional” is that a system offering only few-to-many communication mediums has far fewer “chokepoints”. A bad actor (one who is corrupt, evil, or dishonest) needs much less effort to control a population’s thoughts, feelings, conversations, and indeed its broadly-accepted vocabulary, which serves as the building blocks from which humans form thoughts. That bad actor need only control the chokepoints of the system, and if there are very few, then there are very few sources of information to control.

It’s easy to roll your eyes at this, and normalcy bias makes it even easier. It’s much easier, and more psychologically comfortable, to believe that society’s institutions and systems are built to run smoothly and efficiently. Surely, you might think, these systems have been constructed to select for the most competent, the least corrupt, and those who respect individual freedom. Surely those with institutional power heard the same stories that we all did: those that told of too much power being granted to too few, the dangers of tyranny, and the corrupting nature of power. Surely we, as a society, have figured these problems out by now, and developed selection mechanisms that avoid all of them.

The Utopian Fantasy of Good Faith Normality

Unfortunately, none of these problems have been solved, and one reason lies in the interaction between few-to-many communication mediums and democratic political systems.

The justification for direct democracy begins with a claim that rule by the majority is preferable to rule by the minority—rule by 90% of a population is better than rule by 10%; rule by 60% is better than 40%; rule by 51% is better than 49%.

Within such a system (or similar ones, like a constitutional republic), it should come as no surprise, then, that a few-to-many communication medium would be the best friend of anyone looking to control populations. Whoever controls the chokepoints of information flow throughout society also controls the parameters within which political conversations can occur. Which issues are important? What solutions are on the table? Who is a threat? What can we do to them?

The story goes: with such a system, in order to be elected into power, you must convince a majority of the ruled population that they ought to vote for you. The tyrant king is replaced by the benevolent politician, who is purely a representative for the people. Tyrants, the democracy advocate claims, cannot exist in the political sphere, because tyranny is unpopular.

The will to power nevertheless survives as a constant of the human condition, and it sometimes manifests as the will to exert force over others to advance oneself. In other words, the psychological drive to tyrannize does not disappear within a democratic system. A system which allows those with such a drive to hold power is not obviously better than a system that actively selects for them. So the question remains: does democracy select for those without this tyrannical spirit? Is it a good selection mechanism for benevolent representatives of the people?

My questions are obviously rhetorical, and I won’t lead you on any further. The answer to both is a firm “no”, and it is partly due to control over chokepoints. He who has power, influence, and money also has the ability to exert force on, or extort, those whom he wishes to control. And indeed, in the modern world, this applies both to those with direct political authority and to those by whom they might be controlled: those operating the chokepoints. Only an idiotic tyrant would fail to use such levers to influence the chokepoints of information for their own gain. So, would we be so naïve as to trust democracy (or, for that matter, any of our institutions) to ensure that no shrewd tyrant gains power, either through direct political authority or through control over the chokepoints?

Unfortunately, we’ve apparently been precisely that naïve, or worse. You’ll regularly hear praise for “democracy”, and foolish statements like “it’s the worst political system, but it’s the best one we’ve got!”, which can only be uttered by those cowardly enough to approve of rule-by-majority with the hopes of being on the winning team, instead of questioning the idea of “rule” itself.

This system, combined with the levers of control over these aforementioned “chokepoints” and with the constant human will to exercise force over and tyrannize others, actively selects for tyranny. Ignorance, dishonesty, and corruption drive the tendency to think otherwise.

Computers To The Rescue

That’s a big problem, but fortunately we have computers, and computers are instrumental in solving it, because they facilitate many-to-many communication.

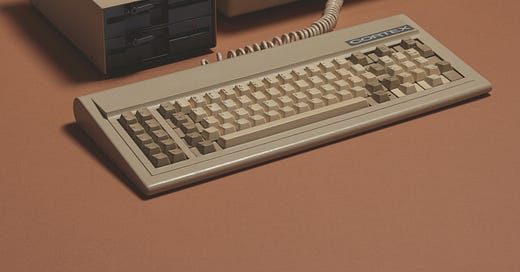

With the advent of the computer—particularly the personal home computer—the flow of information within society is no longer filtered through so few chokepoints. Every household wealthy enough to afford a home computer becomes its own chokepoint. And, luckily for everyone, rapid technological advancement throughout the late 20th and early 21st centuries has driven down the price of a home computer. This has made communication—even extremely unpopular communication—all but impossible to control.

For the most part, the entire set of these chokepoints cannot be easily externally controlled, because the sole authority to communicate information—both privately, and possibly to millions of people—lies purely within an individual’s ownership over their home computer.

Note: There are other factors as well, chiefly an individual’s ability to protect their ownership, but that falls outside the domain of computers, so I’ll leave it out of this post (other than making this comment for completeness’ sake).
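The chokepoint argument can be made concrete with a toy model. The Python sketch below is hypothetical throughout: the function names, node names, and network sizes are invented for illustration. It assumes that every edge is a sender-to-receiver channel, that a censor fully controls any node it captures, and that a receiver counts as reached if at least one uncontrolled sender can talk to it.

```python
from itertools import permutations


def few_to_many(producers, consumers):
    """Broadcast-style network: every producer sends to every consumer; consumers never send."""
    return [(p, c) for p in producers for c in consumers]


def many_to_many(households):
    """Fully connected network: every household can send to every other household."""
    return list(permutations(households, 2))


def uncensored_receivers(edges, controlled):
    """Receivers outside the censor's control that still hear from at least one uncontrolled sender."""
    return {dst for src, dst in edges if src not in controlled and dst not in controlled}


# Few-to-many: 3 broadcasters, 100 consumers. Capturing the 3 broadcasters silences everyone.
broadcasters = ["press", "radio", "tv"]
consumers = [f"consumer{i}" for i in range(100)]
broadcast_net = few_to_many(broadcasters, consumers)
print(len(uncensored_receivers(broadcast_net, set(broadcasters))))  # 0

# Many-to-many: 100 households. Capturing 3 of them leaves every other household reachable.
households = [f"home{i}" for i in range(100)]
mesh_net = many_to_many(households)
print(len(uncensored_receivers(mesh_net, set(households[:3]))))  # 97
```

In the broadcast network, the censor’s job is only as large as the number of producers. In the fully connected network, cutting off even a single household requires capturing every other household. Real networks are far sparser than a complete graph, so this toy overstates their resilience, but the asymmetry between the two shapes is the point.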

So now, the importance of an individual’s ownership over their computer becomes clear. But it isn’t simply their ownership over the physical box of metal that is so important. The true importance lies in the individual’s ability to use their computer for the purpose of many-to-many communication. That ability begins with ownership over the physical metal box, but extends through the entire technological stack that makes such communication possible. If that stack is not, in principle, under the individual’s control, then the ability to communicate using a computer is compromised.

Individualized control over the computing stack means, ultimately, decentralized control over the computing stack. If one popular service (one chokepoint) becomes externally controlled by a bad actor, then individuals remain free to build or use a different service, rather than finding that no alternative is possible.

In modern political discourse, especially that connected to the world of computing, it’s very common to hear the terms “freedom of speech” or “freedom to listen”, and how those freedoms pertain to the Internet (the means by which many-to-many communication is facilitated). If you, the reader, are someone who does not find these freedoms important, then you are the tyrannical personality I spoke about earlier; I am not speaking to you. To the others, who believe in these natural rights and see them as a net good for the human spirit, I write this to suggest that the bedrock of those freedoms does not lie within the rights themselves, and certainly not in naïve trust that those rights will be protected by our institutions, but instead in the much older and more durable principle of ownership over the computing stack. Freedom of speech is an extension of the freedom to compute, which is simply one manifestation of property ownership.

The Modern Chokepoint: The Tech Giant

That brings me to what I perceive to be perhaps the largest threat to the state of ownership in computing, and therefore to the freedoms computers have historically offered humanity. That threat comes from major modern technology corporations.

The computing ecosystem has undergone a massive process of centralization. The vast majority of computer users are on one of two major operating systems and use one of three major browsers, and on those centrally-controlled operating systems and browsers, they use a number of services that are themselves also centrally-controlled.

This centralization has, in effect, produced a new set of chokepoints. The many-to-many communication I mentioned earlier is now only facilitated at the will of these corporations, and whoever is able to work together with them. Any communication they do facilitate will come with its share of hidden catches—you will need to provide data about your identity, what you do on a day-to-day basis, and you’ll need to submit to their rules (whatever they might be). These corporations are surely not too shy to use the power of the state to maintain their stranglehold on the ecosystem, and those with state power are surely not too shy to use these corporations to their benefit.

The Tech Giant’s Best Friend: Complexity

This raises an obvious question: why have these new chokepoints formed? What has made the centralization of the computing world possible?

To understand the answer to this question, you first must imagine what it might take to compete with these giant corporations on their own turf, and how that is distinct from how the computing world began. Why would it be such a seemingly impossible task to compete with, for example, Microsoft’s Windows, or Apple’s macOS? Why is the “year of the Linux desktop” seemingly never going to happen, and why does Linux still regularly fail on very common consumer machines, despite what religious Linux fanatics might tell you?

For one, the amount of software required to compete in the consumer operating system space (and indeed many others) has become astronomically large. The fundamental pieces of an operating system have not become drastically more sophisticated than they were a few decades ago (and indeed, at that time, people wrote operating systems for consumer machines very frequently). What has changed is the set of hardware combinations that a consumer operating system must support to be viable. Because this set is so large, and because the way an operating system talks to a given device is no longer determined by the hardware interface it is connected through (those interfaces now act more like generic protocols), driver software has become a necessity.

A corporation like Microsoft is able to support a massive set of these hardware combinations out-of-the-box, because they’re able to leverage their position as the owner of the dominant consumer operating system. When a hardware company ships a device—like a GPU, or any USB device you can imagine—their first priority is writing driver software for Windows. Otherwise, virtually nobody can use their device. This means that an open operating system like Linux is constantly in a state of catch-up—Linux does not get driver software that works out-of-the-box on day 1 after a hardware device’s release.
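As a rough illustration of why drivers become a necessity, the Python sketch below models an operating system that only talks to devices through an abstract interface, with one driver per device model. Everything here is hypothetical: the `DisplayDriver` interface, the vendor classes, the device IDs, and both command formats are invented for illustration and do not correspond to any real operating system or hardware.

```python
from abc import ABC, abstractmethod


class DisplayDriver(ABC):
    """Hypothetical interface the OS programs against; each device model needs its own implementation."""

    @abstractmethod
    def fill(self, x: int, y: int, w: int, h: int, rgb: int) -> bytes:
        """Translate a generic 'fill rectangle' request into this device's own command bytes."""


class VendorAGpu(DisplayDriver):
    # Hypothetical device: a one-byte opcode, four big-endian 16-bit fields, then a 24-bit color.
    def fill(self, x, y, w, h, rgb):
        fields = b"".join(v.to_bytes(2, "big") for v in (x, y, w, h))
        return bytes([0x01]) + fields + rgb.to_bytes(3, "big")


class VendorBGpu(DisplayDriver):
    # Hypothetical device: a completely different, text-based format for the same operation.
    def fill(self, x, y, w, h, rgb):
        return f"FILL {x} {y} {w} {h} #{rgb:06x}\n".encode()


# The OS can only use devices that someone has written a driver for.
DRIVERS = {"vendor-a:1234": VendorAGpu, "vendor-b:5678": VendorBGpu}


def load_driver(device_id: str) -> DisplayDriver:
    try:
        return DRIVERS[device_id]()
    except KeyError:
        raise LookupError(f"no driver for {device_id}; device is unusable on this OS") from None


def draw_rect(driver: DisplayDriver, x: int, y: int, w: int, h: int, rgb: int) -> bytes:
    """Generic OS code: written once, works with any device that has a driver."""
    return driver.fill(x, y, w, h, rgb)


print(draw_rect(load_driver("vendor-b:5678"), 0, 0, 10, 10, 0xFF0000))  # b'FILL 0 0 10 10 #ff0000\n'
```

Every new device model adds another entry to a table like `DRIVERS`, and every operating system needs its own entries. A dominant operating system gets them from vendors on day one; a smaller one must write them itself, often long after release. With fixed hardware, `draw_rect` could instead emit one known command format directly, and the table would disappear.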

This unfortunate situation has solidified both Windows and macOS as the dominant consumer operating systems, with Linux being a barely-viable third option.

Furthermore, it has also been partly responsible for a similar pattern of centralization in the browser sphere. A friend once told me that the success of the browser is due to the failure of the operating system. This is true on many fronts, but most notably, the dominant operating systems failed to provide a simple, user-friendly way to run sandboxed code securely and to deliver and install software (both temporary and permanent). The web, on the other hand, blows the operating systems out of the water on these fronts (even if it still doesn’t do a great job). But, in doing so, it introduced an entirely new document language (HTML), a styling language (CSS), and a scripting language (JavaScript). That means, in effect, each browser is an interpreter for that document language and styling language, and is also a JIT compiler for that scripting language. It does this on top of the other requirements you’d expect, and as a result, a browser is a gigantic mess of complexity. It’s a software project that can realistically only continue to exist and evolve alongside changing standards; without astronomical effort, it cannot truly be produced from scratch.

You won’t find me praising web standards any time soon—from what I can tell, they are certainly not as well-designed and as simple as they could be—but nevertheless, at least part of the overwhelming complexity of the web is because of the operating system’s failure. The operating system’s failure is because of a lack of competition in operating systems. The lack of competition in operating systems is because of complexity in the hardware domain.

This complexity is, of course, not seen as a problem by these corporations; it is what disqualifies small, innovative teams from tearing the corporate, technologically-incompetent teams apart. (The monopolies or oligopolies held by these corporations also help explain the quality of their products’ software, which is very poor by nearly any metric.) Their competitive edge is the massive disparity in resources, and how they’ve used that disparity to further solidify their position. To everyone else, this complexity should be seen as a very worrying problem.

A World Without This Problem

Now I raise the question: if this were not a problem, what would the world look like?

In such a world, it’d be very easy to compete with Windows. The web’s equivalent would not require Chrome’s complexity, and so it’d also be very easy to compete with Chrome’s equivalent. The computing world—from the hardware, to the highest level software—would be designed such that writing an operating system was very easy, instead of very hard. Hardware wouldn’t be a nest of complex combinations requiring an insurmountable amount of driver software, and instead it would allow developers to make assumptions, and write code directly for the hardware. Hardware would be mostly fixed in-place, which is realistically all that is necessary for the vast majority of consumer use.

This world would have a developer population that wasn’t unnecessarily allergic to rewriting things from scratch. The average developer would not be comfortable trusting absurdly complex black boxes that major corporations produce to control and dominate the industry, because the average developer would know what simplicity and replaceability mean for technical quality, and also for freedom and the preservation of individual rights.

Closing Thoughts

I’m interested in building that world. I think doing so is possible, but will surely be difficult. It will require innovation in the hardware space, and it will require developers who don’t shy away from understanding the fundamental reality of their problem. Those developers must understand hardware, the operating system, and the innermost details of the browser and the web server. And finally, those developers must be clever entrepreneurs, who can find a way to get their foot in the door of the market, and eventually drive the computing world to one with simpler hardware, simpler software, and an allergy to complexity and centralization.

This is a future worth pursuing. There will surely be a lot of money to be made. There will surely be a number of important technological advancements and innovations to discover. But most importantly, there will be freedom—freedom to speak, freedom to listen, freedom to compute, and freedom to own.

If you enjoyed this post, please consider subscribing. Thanks for reading.

-Ryan
