Regulation Cannot Save A Defeated Culture

On markets, regulatory capture, Big Tech, and tangibly improving the software world.

Almost universally, people consider Big Tech responsible for numerous problems, and understandably so. Others have enumerated these problems ad nauseam: exploitative and surveillance-driven business models, lack of respect for end-users, embarrassingly terrible and complex software upon which entire industries rely, and quiet encroachment upon ownership.

A world with Big Tech’s continued success and oligopolization seems like it’s from Brave New World. In this world, computers become hamster wheels, rather than bicycles for the mind. The days of computing freedom are gone—developers build devices and software for dopaminergic activity in service of advertisement and telemetry. A curious kid can’t dig into code, and build his own video game—that would take far too much time away from revenue-generating activity. The individual is, effectually, enslaved. The fire of the human spirit is extinguished. Life is reduced to servitude as one part in a superorganism-like machine. The individual’s ability to express and explore creative ideas, criticize those exercising arbitrary power, and generally flourish is further diminished. Any semblance of meaning evaporates. The frog becomes boiled.

This world is surely a worst-case scenario, meant to illustrate an extreme—but this extreme is one that many agree the computing world is moving toward.

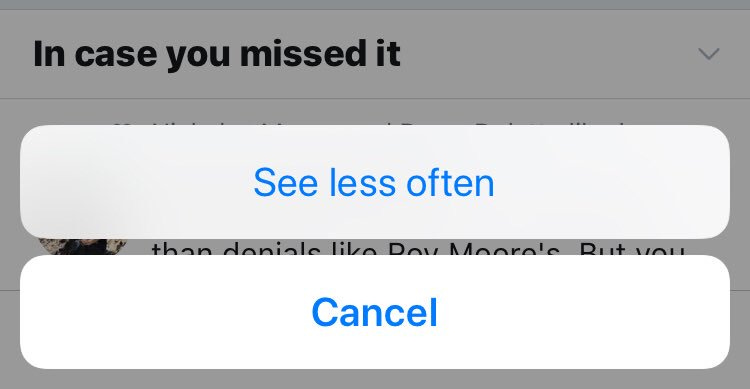

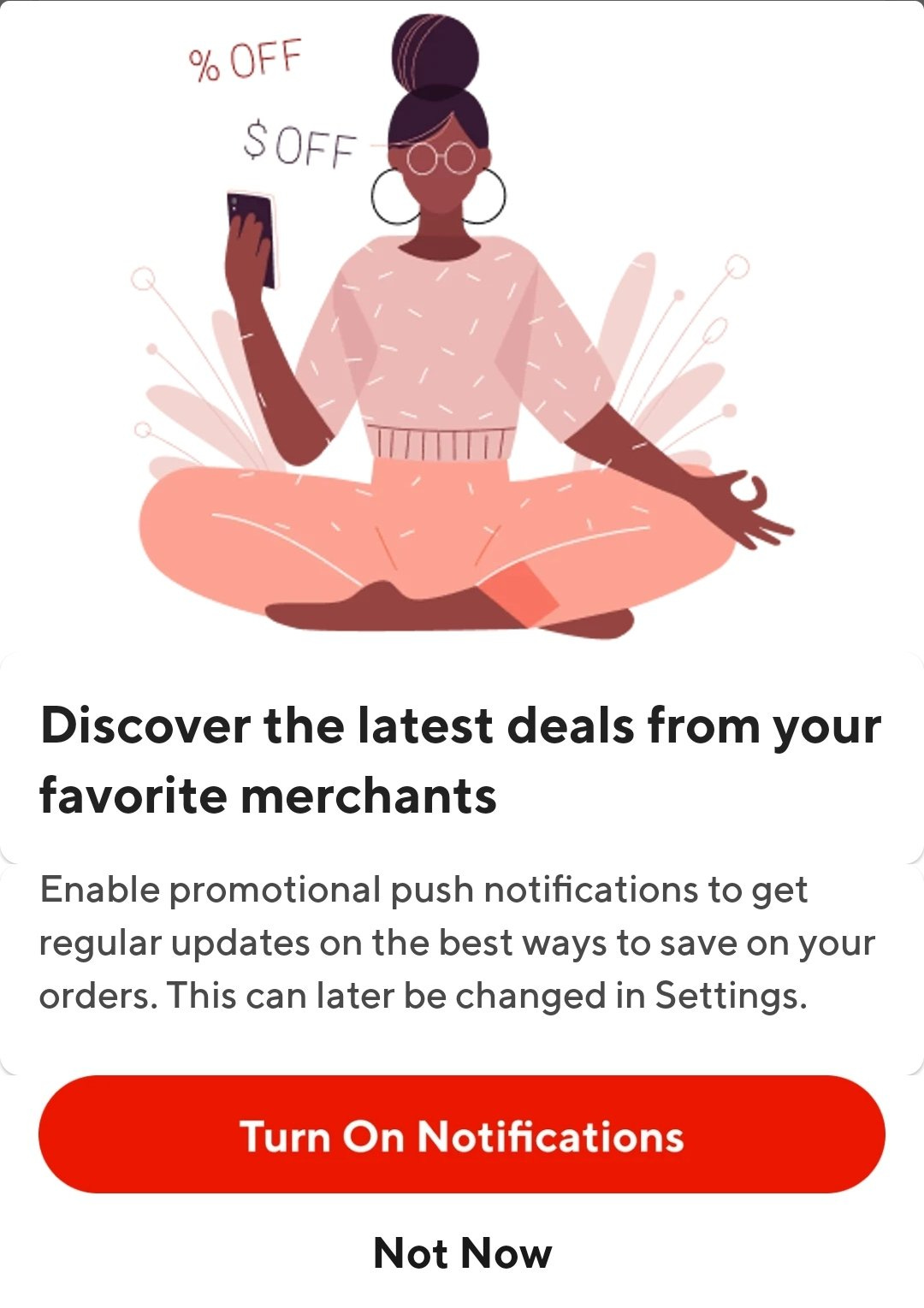

Technology companies do little to disguise how they capitalize on this situation. They embed their condescension and contempt for their users’ agency and choice in everything they do, from a lack of care in their products, to their policies, to their decision to arrest user attention with irrelevant popups and notifications, to their tendency to ship “services” when they actually ship products, down to their language:

Even the preference for the word “user” over “customer”—which everyone has now adopted as common parlance—is in some cases disturbing.

They act as arms of government in implementing “misinformation” policies, because they see themselves (or at least government) as the arbiters of truth—the peasants are incapable of processing information.

As the saying goes, the road to Hell is paved with good intentions. Many within these organizations might repeat the mantra that they’re contributing value to the world, and upholding wonderful-sounding principles like “truth”, “democracy”, and “justice”. But, because they’re human—and thus subject to the human condition—these terms are just proxies for “what I like”. In powerful positions, their actions turn from foolishly arrogant and condescending to downright psychopathic.

More existentially disturbing than condescension and contempt is their interest in the divorcing of users from reality—it’s easy to notice their recent obsession with “the Metaverse”. They don’t intend to provide mechanisms by which people may flourish in day-to-day life—they intend to replace day-to-day life. This might be because day-to-day life—the real world—is where technology corporations operate, and where they might be dislodged. They’d prefer to control the substrate of what users consider reality, so that a world without them is so incomprehensible that it seems absurd on its face.

I confess that I find all current conceptions of “the Metaverse” a fairly laughable approximation of Nozick’s “experience machine”—especially when considering the usual Big Tech programming teams putting it together—but that is not to say many iterations of the idea are not disturbing, nor is it to say that a workable implementation of such technology would not gain traction. In any case, it serves as a useful window into the heart of these corporations.

It isn’t surprising that those, like myself, who are interested in computing as a tool for individual flourishing—for creative expression, scientific endeavor, freedom to speak, freedom to learn, freedom to compute, and freedom to associate—are disturbed by the behavior of large technology corporations. That includes those merely interested in software quality who might otherwise prefer to stay apolitical.

The obvious question then arises: why do these corporations operate in this way, and if it is a problem, what is there to do about it?

While it may be tempting to employ Hanlon’s razor as an explanation, I reject it. Given that there exists some well-meaning stupidity, it doesn’t follow that there does not exist malice. And in any case, the distinction between malice and stupidity is blurrier than the rule of thumb suggests—the decision to naïvely exercise arbitrary power given stupidity is both functionally and morally equivalent to malice.

Today, it’s overwhelmingly popular to explain this situation as but another example of “capitalist exploitation”. The problem, as claimed, is with “capitalism” itself—the principles of private property ownership and voluntary exchange—and with “corporate greed”, and so the solution must be involuntary regulation by force. The story—downloaded to every public school student’s mind through their high school civics class—goes: the free market run amok cannot be trusted, and it has historically required regulation to “reel it in”.

And, really, this is perfectly reasonable. After all, the private sector is composed only of greedy corporate actors. The public sector is just, well, the public! Washington, D.C. is just you and me! Greed does not exist in the public sector, and even if there were any greed in the public sector, the government has been carefully designed with “checks and balances”, and it is “safeguarded with democracy”… Uh, yes, I understand that members of all branches of the federal government attend the same dinner parties, shake hands and share drinks with their “opponents” off-camera, trade employees with big corporations, and all seem to get extravagantly rich during every crisis despite having relatively modest salaries… But… never mind all of that: the checks and balances work, and the multi-million dollar mansions are just from their charities and best-selling books! So therefore, the solution must be state regulatory enforcement.

In this imaginary world, Big Tech companies—many of which, as already discussed, are predatory and exploitative by nature—will simply be forced to act more ethically against their nature. And when they inevitably attempt exploitation through means not prevented by regulation, that is simply a call for more regulation to cover more surface area!

This perspective is often argued in spite of the fact that the modern Western economy—in which Big Tech corporations have evolved—does not closely resemble a laissez faire capitalist, free market economy—there are major regulatory forces at work even in relatively unregulated sectors. Companies have had the privilege of artificially-free credit, bailouts, and a lack of consequences for unprofitability. So to associate the problem with “capitalism” is on its face absurd, but it’s an explanation which is nevertheless remarkably popular.

The brainwashing goes beyond diagnosis and mere suggestion of state force—it also promises that exercising the state with regulatory force is fulfilling, productive human action which improves the world. It is a mechanism by which one can make a difference, and leave the world a bit better than they found it. It’s a view which overlooks a glaring paradox. Man was not born into the world with computers, and operating systems, and software (in fact, he wasn’t born with clothing, food, housing, and a great number of other such things)—he had to productively act in transforming natural resources into those products. If the software world is to be improved, it also requires productive human action; someone, somewhere, must transform “natural resources” (or resources which are less processed) into that faster, simpler, higher quality, more ethical software. The regulation-proponent never addresses that use of regulatory force is anti-production by definition—particularly anti-production-which-is-seen-as-bad—and furthermore how anti-productive behavior can ever be contorted to instead be productive. Software cannot be legislated into existence. Just like hardware, mining equipment, processed raw materials, clean water, and food, it must be produced.

A closer examination of the political system, regulatory history, and the human soul reveals the true nature of this instinct-to-regulate—and perhaps why public education curriculum was designed to produce this instinct. Regulatory measures are the corporation’s grenades. They are not the public enforcing its interest—whatever “the public” means, and whatever “the public interest” means—on predatory third parties; they are the predatory third parties waging war on one another. They are the struggle for power and domination—the consumer is collateral damage. Behind every regulatory measure aimed at a corporation is another corporation looking for a competitive edge.

And regulation doesn’t provide competitive edge in the traditional sense—one corporation is not producing higher quality products more efficiently, more cheaply, or more creatively, nor are they expanding into a new market. It’s the sort of “competitive edge” which comes from paying the local mafia to hold opposition at gunpoint. Through regulation, the less productive gain an edge over the more productive. Microsoft, or Apple, or Google might lose on day one—and good riddance—but someone else wins, and they win for the wrong reasons, because there has been no net-gain of productive human action. Exploitative, surveilling software—which we’ve all grown to despise—is replaced by software which was incapable (for one reason or another) of winning in the market.

The cycle will then continue—Microsoft, Apple, and Google won’t go down without a fight, and they have plenty of capital to spend on lobbying their own measures. The industry will slow to a halt, burdened by regulatory capture. It will become a shell of its former self—focused more on navigating regulatory mazes than producing good things. The consumer ultimately loses, because without productive human action, the world strays slightly toward its original condition once more—one without computers or software at all, never mind good or useful software.

The essence of civilization is productive human action which transforms the natural world. A regression to a less productive population is a regression to a less civilized world. This remains the case irrespective of the number of magic scrolls that legislature chooses to write. Technological progress, in this case the computer, was painstakingly made for very good reasons, and killing the engine which produced it—and continues to maintain it—would be a grave error.

Regulation, used as a tool against the Big Tech oligopoly, will therefore leave us in a worse world: one of even-more-heavily weaponized legal teams, and competition through force rather than through productivity.

One might claim that regulation cannot purely be the wrong tool. It may have issues, but in some cases, it must be appropriate; after all, nothing is black and white. Responsible thinkers have nuanced perspectives, and being strictly opposed to regulation is not nuanced. Regulation might be used to advantage a well-meaning and honest competitor—one perhaps undiscovered by the market, but not necessarily failing in the market. That competitor may have the purest of intentions, and may be building a solid, ethical product.

But I’d posit that the use of regulation in such a case will, at best, temporarily solidify a local maximum of industrial progress. It may cause short-term improvements, but not without the sacrifice of long-term advancements. This competitor’s victory is both temporary and hollow, and with that victory, the state is granted a stronger ability to choose winners and losers. Obtaining control of the state’s sharpened sword then becomes the primary goal of each participant in the market vying for dominance. The originally-honest competitor—strengthened by lack of competition due to regulation in its favor—inevitably transforms into yet another exploitative party, acting identically to its predecessors. No real production occurred; no real technical problems solved. Software remains in identical, and eventually worse, shape than before.

In my estimation, one of the following two explanations is true: it is either the nature of large technology corporations, operating in the absence of competitive forces, which leads humanity closer to this extreme—or it is the weakness of culture which fails to prevent them from doing so. In either case, the solution is a reformed culture which is productive by nature, and not defeated by nature.

The software world I hope to help build is different than that which exists today, and that which Big Tech corporations have no problem driving us toward. This would be a world with widespread competition, simple and rewritable building blocks at the core of the ecosystem, simple and open protocols for networked communication, simple and open file formats for the most important information, a high value for user experience and design, and the impossibility of extinguishing computing freedom.

Building such a world—or even a closer approximation to that world—requires research, social organization, entrepreneurship, marketing, and engineering. All of these are examples of productive human action.

I don’t think I’m alone in preferring this world. And if it truly is a better world, then it does not require force—it only requires free choice. But users can’t choose a world that doesn’t yet exist, nor can legislature write a magic scroll which suddenly makes this world exist. This world must be built with productive human action. It must be carefully crafted to preserve important designs and principles. And it must be well-marketed to the wider public, so that they might have a full picture of their choices.

I write this post to oppose hollow victory as a means to solve the problems in the modern software landscape—hollow victory in attempting to build this world. A culture which desires hollow victory—a kid who just lost a fight, and needs his dad to step in—is not a culture capable of productively evolving the software landscape in this direction.

I’m no friend of giants who currently dominate the industry, nor am I a fanatic for the modern software ecosystem. But I prefer a fulfilling victory with real outcomes—a victory which is hard fought and earned.

If you enjoyed this post, please consider subscribing. Thanks for reading.

-Ryan

Hi Ryan, I have a lot of respect for you as a programmer and I agree with a lot of the problems you describe in the first paragraphs. But I’m always a bit perplexed by the solutions you propose in order to solve these problems. I don’t think it makes much sense to argue against your anarcho-capitalistic leanings, because that would just take way too long. I’ll try to argue against what seem to me the most obvious faults in your arguments. Also, because you don’t seem to like the word capitalism, I will be using either “real existing capitalism” or “state-capitalism” to describe the kind of capitalism we have today.

>The regulation-proponent never addresses that use of regulatory force is anti-production by definition.

This is somewhat of a circular argument: regulation is anti-production because it inhibits companies from producing in a certain way. The thing missing here is that there are other forces, like monopolistic practices and anti-competitive behaviour, that hinder productivity in an economy. I know of course that in an ancap world those problems would just magically disappear, but in current real existing capitalism, if regulation can limit the extent to which these anti-productive activities harm the economy, regulation itself can increase productivity.

A perfect example would be one of the reasons you wrote this article: the Apple store monopoly. Because Apple has a monopoly on its operating system and its app store, it can charge developers whatever commission it wants on apps purchased from its store. The OS does not allow alternative stores, eliminating any competition. The solution: forcing Apple to abandon this anti-competitive practice by allowing other stores to be installed on its OS. It’s really hard to see how this is not good for app developers.

>Behind every regulatory measure aimed at a corporation is another corporation looking for a competitive edge.

I think this sentiment comes from the thinking that every state is corrupt, and that if the state regulates the market it does so because it is bribed by a company or a specific business sector to do so, giving that company or business sector the advantage in an otherwise perfectly balanced free market. While I agree that most regulations in a state-capitalistic system are motivated by that dynamic, it does not mean that this must always be the case. A state is at least potentially democratic. If a regulatory measure is motivated by democratic forces, it can lead to a more just and more productive economy.

>The software world I hope to help build is different than that which exists today, and that which Big Tech corporations have no problem driving us toward. This would be a world with widespread competition, simple and rewritable building blocks at the core of the ecosystem, simple and open protocols for networked communication, simple and open file formats for the most important information, a high value for user experience and design, and the impossibility of extinguishing computing freedom.

It’s hard for me to see how, in a world of Big Tech, be it in a state-capitalist economy or in an anarcho-capitalist world, even if we had these simple and rewritable building blocks, file formats, etc., such large companies could not just use their monopoly and influence to change these building blocks, file formats, etc. to give themselves a market advantage and to solidify their monopoly, like Microsoft, Apple and Google have always done. That would essentially leave us where we are. Even if the playing field is initially leveled, there are inherent dynamics in a market-based economy that help create these monopolies without any state intervention: network effects, economies of scale, and lock-in effects, to name a few.

>Man was not born into the world with computers, and operating systems, and software (in fact, he wasn’t born with clothing, food, housing, and a great number of other such things)—he had to productively act in transforming natural resources into those products.

This is an interesting point to bring up, since almost every original design of the components that make up a computer today was developed by publicly funded institutions, away from competition and full of people who did the work they did out of pure interest and love for what they do. I say this because of the common idea that invention can only happen in a competitive free-market environment, while historically the private sector has done very little for technological achievements in terms of groundwork and heavy lifting compared to the public sector, for which you seem to have so much disdain. As a matter of fact, even after the invention of computers and telecommunication, the private sector ignored those technologies for decades because it didn’t see any way to generate short-term profit from them.

This attack on regulation and the alternatives/solution you offer strike me as simplistic. It's equivalent in spirit to saying,

"The rule of law is too restrictive. We should remove all laws and then people should just not murder each other and stop stealing. Let's all be nice please."

People will probably always steal and always murder, unless maybe we live in a post-scarcity world, and even then it will continue to happen.

And the same applies to companies. Left unchecked, they will always try to maximize their profits and gain market advantages. And market advantages are not productive. In fact they destroy productivity as it's harder to make any progress without the monopoly hand slapping you away. It's not a plot, it's not a conspiracy, it's not that these people are evil (well some are) - it's just that the incentives are there pushing them towards this way of doing business. They'll prioritize growth to satisfy shareholders.

Unregulated companies do anti-competitive things and hinder the productivity of entire sectors.

I'm not in favour of extreme regulation, but saying that all regulation is useless, and that we should just be nice to each other and make better software, is really unconvincing to me.